Wearable Acoustic Sensor Array System Featuring Remote Transmission of Body Sounds

—Toward “Tele-auscultation” and Visualization of Physical States based on Body Sounds—

Nippon Telegraph and Telephone Corporation (Head Office: Chiyoda Ward, Tokyo; President and Representative Director: Jun Sawada; referred to below as “NTT”) has developed a wearable acoustic sensor array system that uses multiple acoustic sensors to listen to sounds from various parts of the human body and transmits the collected acoustic signals to a remote location over a wireless network.

The developed system consists of an examination vest equipped with multi-channel acoustic sensors, a transmitter, and a receiver. When the subject puts on the examination vest, its acoustic sensors collect sounds from various parts of the subject’s body, and the transmitter wirelessly sends those sounds to a remotely located receiver. By operating the receiver, a user can listen to or record the sounds coming from different locations in the subject’s body.

Once this system is put into practical use in medical care, a medical practitioner will be able to listen to sounds from various locations on a patient’s body without making direct physical contact with the patient or using a traditional stethoscope, which will be useful for online medical examinations. Because the system can also collect and record high-quality sounds from many parts of the body simultaneously, it is expected to play a role in the research and development of new medical techniques such as the visualization of physical states based on 3D body sounds.

This research was presented at NTT R&D Forum 2020 Connect, held from November 17 to 20, 2020.

=> Molecular and Bio Science Research Group

Background

A variety of sounds, including cardiac and pulmonary sounds, arise from the human body; these are called body sounds. Determining a person’s physical state by listening to these sounds is called “auscultation,” and it is commonly performed with a traditional stethoscope. Auscultation has been part of basic health examinations and medical care at clinics and hospitals for hundreds of years.

Auscultation has a number of advantages as a diagnostic technique. It is non-invasive and can be performed repeatedly, it provides results immediately on-site, and it can be performed at home or in nursing homes and assisted-living facilities without large-scale instrumentation. On the other hand, auscultation must be performed one-on-one, with direct contact between the patient and the medical practitioner, which makes it difficult to perform during the spread of an infectious disease such as COVID-19. Even when electronic stethoscopes are used in online medical examinations, an operator typically has to be in close contact with the patient. Patients can apply a stethoscope to their own bodies, but auscultation performed by someone who is not a healthcare professional can be inconvenient and difficult, and it may be inaccurate because of poor stethoscope positioning and similar problems. In addition, the growing number of elderly patients raises concerns that an even heavier burden will fall on the medical specialists who have the skills required to perform auscultation properly.

System overview

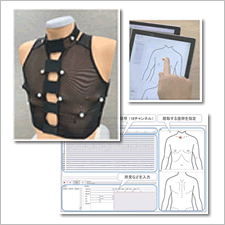

This system consists of an examination vest, a transmitter, and a receiver. The inner side of the examination vest is fitted with many acoustic sensors. Once the person to be examined puts on the vest, each sensor captures sounds at a different location on the body (Fig. 1). The system transmits these sounds to a remotely located receiver over a wireless network.

The receiver (running on a laptop or tablet) displays a diagram of the human body on the screen. By touching or clicking a particular spot on the diagram, the user can listen to sounds from the corresponding location on the patient’s torso, neck, etc. (Fig. 2). (Sounds from locations that fall between the physical acoustic sensors are automatically synthesized from the signals of multiple sensors.) The receiver also displays the acoustic waveform obtained from each sensor separately, so the user can select a particular waveform and listen directly to that sound (Fig. 3). All received acoustic signals can be recorded and played back.
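How the receiver synthesizes sounds between sensors is not described here. As one plausible approach, offered only as a sketch, the following Python code blends the signals of all sensors with inverse-distance weights relative to the point the user touches on the body diagram. The function name `synthesize_at`, the 2D diagram coordinates, and the weighting exponent `power` are hypothetical assumptions, not details of NTT's system.

```python
import math
from typing import Sequence

Position = tuple[float, float]  # (x, y) on the on-screen body diagram (hypothetical units)


def synthesize_at(
    point: Position,
    sensor_positions: Sequence[Position],
    sensor_signals: Sequence[Sequence[float]],
    power: float = 2.0,
) -> list[float]:
    """Estimate the sound at `point` by inverse-distance weighting (an assumed method)."""
    if len(sensor_positions) != len(sensor_signals):
        raise ValueError("need exactly one signal per sensor")
    n = min(len(s) for s in sensor_signals)  # use the common signal length

    weights = []
    for idx, (sx, sy) in enumerate(sensor_positions):
        d = math.hypot(point[0] - sx, point[1] - sy)
        if d == 0.0:
            # The point sits exactly on a sensor: return its signal unchanged.
            return list(sensor_signals[idx][:n])
        weights.append(1.0 / d ** power)  # closer sensors count more

    total = sum(weights)
    # Each output sample is the normalized weighted sum of the sensors'
    # samples at the same time index.
    return [
        sum(w * sig[t] for w, sig in zip(weights, sensor_signals)) / total
        for t in range(n)
    ]


print(synthesize_at((1.0, 0.0), [(0.0, 0.0), (2.0, 0.0)], [[1.0, 1.0], [3.0, 3.0]]))  # prints [2.0, 2.0]
```

A point midway between two sensors receives equal weight from each, so their signals are simply averaged. Plain weighted mixing ignores the different propagation delays from a sound source to each sensor; a real system might time-align the signals before blending, or use a different method entirely.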

The system uses many sensors to capture acoustic signals arising from throughout the inside of the body. The current prototype simultaneously collects 18 acoustic channels and 1 electrocardiogram (ECG) channel. In addition to the frequency range used in conventional auscultation, the system is designed to capture other frequency bands in order to acquire richer, multi-dimensional information about the patient.
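To make the channel count concrete, here is a minimal sketch of how one sampling instant of the prototype's 18 acoustic channels plus 1 ECG channel could be packed into a fixed-size binary frame for wireless transmission and unpacked at the receiver. The frame layout (a little-endian 32-bit frame counter followed by one signed 16-bit sample per channel) and the helper names `pack_frame` and `unpack_frame` are hypothetical; NTT's actual data format is not described here.

```python
import struct

NUM_ACOUSTIC = 18  # acoustic channels in the current prototype
NUM_ECG = 1        # ECG channel
NUM_CHANNELS = NUM_ACOUSTIC + NUM_ECG

# Hypothetical wire format (not NTT's): "<" = little-endian, no padding;
# "I" = uint32 frame counter; "h" = one int16 sample per channel.
FRAME = struct.Struct("<I" + "h" * NUM_CHANNELS)


def pack_frame(counter: int, acoustic: list[int], ecg: int) -> bytes:
    """Serialize one sampling instant of all channels into bytes."""
    if len(acoustic) != NUM_ACOUSTIC:
        raise ValueError(f"expected {NUM_ACOUSTIC} acoustic samples")
    return FRAME.pack(counter, *acoustic, ecg)


def unpack_frame(data: bytes) -> tuple[int, list[int], int]:
    """Inverse of pack_frame: returns (counter, acoustic samples, ECG sample)."""
    counter, *samples = FRAME.unpack(data)
    return counter, samples[:NUM_ACOUSTIC], samples[NUM_ACOUSTIC]


frame = pack_frame(7, list(range(-9, 9)), 123)
print(len(frame), unpack_frame(frame)[0])  # prints 42 7
```

Under this assumed layout each frame is 4 + 19 × 2 = 42 bytes, so at a hypothetical sampling rate of 8 kHz on every channel the raw stream would be 42 × 8,000 = 336,000 bytes per second (about 2.7 Mbit/s) before compression or protocol overhead.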

The system has been researched and developed based on NTT’s Medical and Health Vision*.

Application to tele-auscultation

This system is designed to make tele-auscultation (listening to the body remotely over a network) feasible for practical medical care in many situations. Used together with a tablet or laptop, the examination vest will enable valuable diagnostic acoustic data to be collected remotely during an interactive video conversation between the patient and a doctor or nurse, all without close physical contact.

Application to AI-auscultation (analysis of interior physical conditions based on sound)

NTT is promoting research on “AI-auscultation,” which analyzes a person’s internal physical condition based on body sounds. This includes technology for describing physical conditions in text form based on body sounds. The technology is based on a “sequence-to-sequence model” that converts a time series of acoustic signals into a sequence of words. With this approach, we expect AI-auscultation to generate text at a specified level of detail that includes not only classification-related information, such as the presence or absence of an abnormality or the name of a disease, but also information on the changes, location, and extent of a suspected abnormality (Fig. 4).
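The general shape of sequence-to-sequence text generation can be sketched as an encoder that summarizes the whole input sequence and a decoder that emits one word at a time, conditioned on that summary and on the words produced so far, until an end-of-sequence token appears. The Python below shows greedy decoding under those assumptions. `toy_encode` and `toy_decode_step` are hypothetical, hand-written stand-ins for trained neural networks, and the tiny vocabulary is invented for illustration; this is not NTT's model.

```python
from typing import Callable, Sequence

BOS, EOS = "<s>", "</s>"  # start- and end-of-sequence tokens

# Hypothetical interfaces standing in for trained networks.
Encoder = Callable[[Sequence[Sequence[float]]], list[float]]
DecoderStep = Callable[[list[float], list[str]], dict[str, float]]


def greedy_decode(
    frames: Sequence[Sequence[float]],
    encode: Encoder,
    decode_step: DecoderStep,
    max_len: int = 20,
) -> list[str]:
    """Turn a time series of feature frames into a word sequence."""
    context = encode(frames)  # summarize the whole input sequence
    words = [BOS]
    for _ in range(max_len):
        scores = decode_step(context, words)  # score candidate next words
        best = max(scores, key=scores.get)    # greedy: take the top-scoring word
        if best == EOS:
            break
        words.append(best)
    return words[1:]  # drop the start token


def toy_encode(frames: Sequence[Sequence[float]]) -> list[float]:
    # Toy encoder: per-dimension mean over time (a trained model would learn this).
    n = len(frames)
    return [sum(f[i] for f in frames) / n for i in range(len(frames[0]))]


def toy_decode_step(context: list[float], words: list[str]) -> dict[str, float]:
    # Toy decoder: follows a fixed script chosen by a threshold on the context.
    script = ["abnormality", "suspected"] if context[0] > 0.5 else ["no", "abnormality"]
    pos = len(words) - 1  # number of words emitted so far
    if pos < len(script):
        return {script[pos]: 1.0, EOS: 0.1}
    return {EOS: 1.0}


print(greedy_decode([[0.9], [0.7]], toy_encode, toy_decode_step))  # prints ['abnormality', 'suspected']
```

Greedy decoding simply keeps the highest-scoring word at each step; beam search, which keeps several candidate sentences in play, is a common alternative. In a real system the input frames would be acoustic features computed from the multi-channel recordings.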

NTT is also researching the visualization of physical states based on body sounds as another aspect of AI-auscultation. It is well known that a skilled medical specialist can form detailed mental images of a patient’s internal body structure and functions by performing auscultation with a traditional stethoscope. Applying AI to the sounds collected by the 3D array of acoustic sensors in the examination vest may help determine and visualize physical states inside the patient’s body. To date, NTT researchers have successfully estimated cardiac states from cardiac sounds and used those estimated states to generate a moving image of the heart’s estimated three-dimensional shape, based on MRI images of the patient acquired separately (Fig. 5).

In this and other ways, multi-channel acoustic information captured by this system can be combined with patient information gathered through other sensing modalities to generate user-friendly representations such as written text or moving images. The system might also serve as a source of big data on human physiology for other areas of medical machine learning and related AI research.

Future outlook

Going forward, we plan to make the system easier to use and its sounds easier to listen to, so that we can deliver a practical “tele-stethoscope” as quickly as possible to help with early detection and prevention of illness as part of routine medical care in the tele-health era. We also plan to expand our research on technologies that graphically show body locations suspected of abnormalities, based on body sounds and how they change during everyday life, and we will continue developing techniques for expressing the meaning of those sounds in text form. Our overall aim is to use these technologies to enhance people’s well-being through non-invasive, remote, networked health-management support that includes quantitative evaluations using predictive and preventive indices based on AI modeling of the human body and on big data collected by wearable sensors.

Glossary

- * ... Medical and Health Vision

- NTT’s Medical and Health Vision reflects its decision to contribute to the future of medical care so that people can stay healthy and maintain an optimistic view of the future. The idea here is to achieve a “Bio Digital Twin” (BDT) that maps an individual’s physical and mental characteristics in detail through Digital Twin Computing, a key element of NTT’s Innovative Optical and Wireless Network (IOWN), and to use the BDT to predict the individual’s future physical and mental states.